Big Data engineering for the AI era

Raw data is a cost center. Structured, real-time, and vectorized data becomes an operational asset. We design and build high-performance data platforms, automated ETL pipelines, and scalable architectures that turn fragmented data into decision systems and AI-ready infrastructure.

Big Data services built for operational scale

We design and implement Big Data systems that turn fragmented data into a structured, reliable, and usable layer for decision-making and automation. Each service is focused on how data moves, how it is controlled, and how it creates business value.

AI data supply chain & ETL

Real-time data platforms

Data lakehouse architecture

Agentic decision intelligence

GenAI privacy & data provenance

Big Data consulting

Schedule a Free Big Data Consultation

Let’s talk about your data goals and how to turn raw information into business value.

Engineering you can audit. Code you can scale. Partners you can trust.

Why companies trust SumatoSoft

We build AI-powered data platforms that can actually handle real-world usage. Most data platforms can store, process, and generate reports. But when you connect AI, real-time decisions, or high-load operations, the system starts failing. Context is missing. Costs increase. Outputs cannot be trusted.

We handle it. Let us explain how.

Vector database orchestration

You cannot run AI on raw tables and expect accurate answers. Without a vector layer, your system cannot retrieve context properly. It guesses. That is where bad outputs come from.

We convert your data into high-dimensional embeddings and engineer vector database architectures using Pinecone, Milvus, Weaviate, and pgvector. Your system retrieves meaning, not rows, and responds with actual context.
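To make "retrieves meaning, not rows" concrete, here is a minimal sketch of similarity search over embeddings. It is an illustration only: the three-dimensional vectors, the document ids, and the `top_k` helper are hypothetical, and the in-memory dictionary stands in for a real vector database such as those named above.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def top_k(query_vec, index, k=2):
    """Return the k document ids whose embeddings are closest to the query."""
    scored = [(cosine_similarity(query_vec, vec), doc_id)
              for doc_id, vec in index.items()]
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:k]]

# Hypothetical toy embeddings; real models emit hundreds of dimensions.
index = {
    "refund_policy": [0.9, 0.1, 0.0],
    "shipping_times": [0.1, 0.9, 0.1],
    "warranty_terms": [0.7, 0.2, 0.3],
}

# Stands in for the embedding of a question like "how do I get my money back?"
query = [0.8, 0.1, 0.1]
results = top_k(query, index)
```

The query shares no keywords with the stored documents, yet the nearest vectors are the refund and warranty entries. A production vector database applies the same idea with approximate nearest-neighbor indexes so that it scales beyond a linear scan.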

Data governance for GenAI

If you cannot trace an output, you cannot trust it. Most systems push data into AI models without control. Sensitive information leaks. Outputs cannot be verified. Compliance becomes a risk.

We enforce automated PII redaction and full data lineage across the pipeline. Every output is linked to a specific source inside your data platform. When a result appears, you know exactly where it came from.
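As a rough illustration of redaction combined with lineage, the sketch below masks emails and phone numbers and attaches a source reference to each record. The regular expressions, the `with_lineage` helper, and the `crm/tickets/1042` identifier are assumptions made for this example; production redaction typically relies on dedicated PII detection rather than two patterns.

```python
import hashlib
import re

# Deliberately simple patterns; they will miss many real-world PII formats.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def redact(text):
    """Replace emails and phone numbers with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)

def with_lineage(source_id, raw_text):
    """Return redacted text plus the metadata needed to trace it back."""
    return {
        "source_id": source_id,
        # Hash of the original content, so the source version can be verified
        # later without storing the sensitive text itself.
        "source_sha256": hashlib.sha256(raw_text.encode("utf-8")).hexdigest(),
        "text": redact(raw_text),
    }

record = with_lineage(
    "crm/tickets/1042",  # hypothetical source identifier
    "Contact jane.doe@example.com or +1 (555) 010-2030 about the invoice.",
)
```

Only `record["text"]` would be passed to a model; `source_id` and `source_sha256` travel with it so that any answer built on this record can be traced to its origin.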

Data gravity and edge processing infrastructure

Moving petabytes of raw telemetry to the cloud for AI inference will bankrupt your IT budget. We move decisions to the data.

Data is processed at the source – IoT gateways, edge nodes, on-prem systems. High-volume streams are filtered, aggregated, and structured before anything reaches the cloud. Only high-value data moves upstream. Costs stay predictable. Systems stay fast.
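A minimal sketch of what filtering and aggregating at the source can look like: one window of raw sensor readings is collapsed into a small summary, and only out-of-range readings are kept in full. The field names, thresholds, and the `summarize_window` helper are hypothetical.

```python
from statistics import mean

def summarize_window(readings, low, high):
    """Collapse one window of raw readings into a compact summary.

    Readings outside [low, high] are kept verbatim as anomalies;
    everything else is represented only by aggregate statistics.
    """
    values = [r["value"] for r in readings]
    anomalies = [r for r in readings if not (low <= r["value"] <= high)]
    return {
        "count": len(values),
        "mean": round(mean(values), 2),
        "max": max(values),
        "anomalies": anomalies,
    }

# Hypothetical temperature readings collected on an edge gateway.
window = [
    {"ts": 0, "value": 70.1},
    {"ts": 1, "value": 70.4},
    {"ts": 2, "value": 95.2},
    {"ts": 3, "value": 70.3},
]

summary = summarize_window(window, low=60.0, high=80.0)
```

The gateway would send `summary` upstream instead of every reading. With windows of thousands of readings, the payload shrinks accordingly while the anomalous values remain available in full.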

Synthetic data generation capability

If your data is incomplete or restricted, your models will never reach production quality. Waiting for perfect datasets slows everything down. Using real data creates compliance risk.

We build generative pipelines that produce synthetic datasets with the same statistical behavior as real data. You train, test, and validate systems without exposing sensitive information. Development moves forward without waiting on data access.
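The idea of "same statistical behavior" can be sketched with a deliberately crude stand-in: fit a mean and standard deviation to one real column, then sample new values from that distribution. The column values and helper names are hypothetical, and a single Gaussian is an assumption made for brevity; real generative pipelines model joint distributions across many columns.

```python
import random
from statistics import mean, stdev

def fit(values):
    """Estimate the parameters of a normal distribution from real values."""
    return mean(values), stdev(values)

def sample(params, n, seed=0):
    """Draw n synthetic values from the fitted distribution."""
    mu, sigma = params
    rng = random.Random(seed)  # seeded so runs are reproducible
    return [rng.gauss(mu, sigma) for _ in range(n)]

# Hypothetical real order values that must not leave the secure environment.
real_order_values = [42.0, 55.5, 38.2, 61.0, 47.3, 52.8, 44.1, 58.6]

params = fit(real_order_values)
synthetic = sample(params, n=5000)
```

None of the synthetic values is copied from a real record, yet their mean and spread track the original column closely enough for load tests or pipeline validation.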

Request a Project Estimate

Receive a detailed estimate for building your Big Data platform – no commitment required.

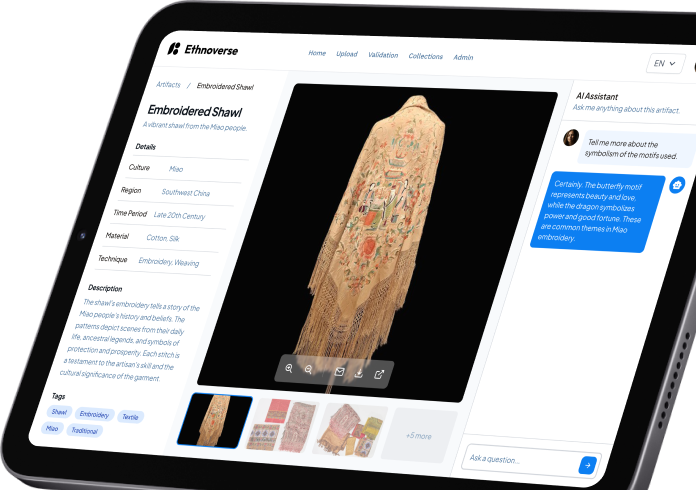

Our recent works

Technologies we work with

Databases (relational & NoSQL)

- PostgreSQL

- MySQL

- Microsoft SQL Server

- MongoDB

- Redis

- Cassandra

- AWS DynamoDB

- Apache HBase

- ClickHouse

- Neo4j

Data warehousing & OLAP

- Amazon Redshift

- Google BigQuery

- Snowflake

- ClickHouse

- Cloudera

- DataStax

Streaming & real-time processing

- Apache Kafka

- Apache Kudu

- AWS Kinesis

- Google Pub/Sub

- Apache NiFi

- MQTT / WebSockets

Monitoring & metrics

- InfluxDB

- Chronograf

- Graphite

- Prometheus

- Grafana

Analytics & business intelligence

- Google Analytics

- Power BI

- Tableau

- Looker

- Superset

- Metabase

- Grafana

In-memory caching & acceleration

- Redis

- Memcached

What it takes to build a data-powered app

Built for high-volume and regulated environments

Big Data becomes critical where operational decisions depend on speed, precision, and scale. Each industry brings its own constraints – regulatory pressure, real-time execution, or high-volume data flows. Our solutions align directly with these conditions and support how your business operates day to day.

Finance and fintech

Real-time financial operations leave no room for delayed analysis. Our data platforms combine transaction streams, behavioral signals, and historical data into a single decision layer that operates in real time. Risk detection, credit evaluation, and anomaly identification run within the transaction flow, without introducing friction.

The result is controlled risk exposure, faster financial decisions, and full visibility across activity.

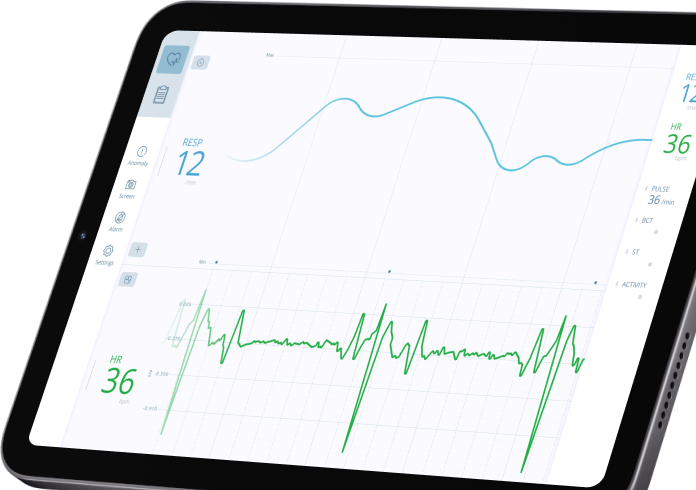

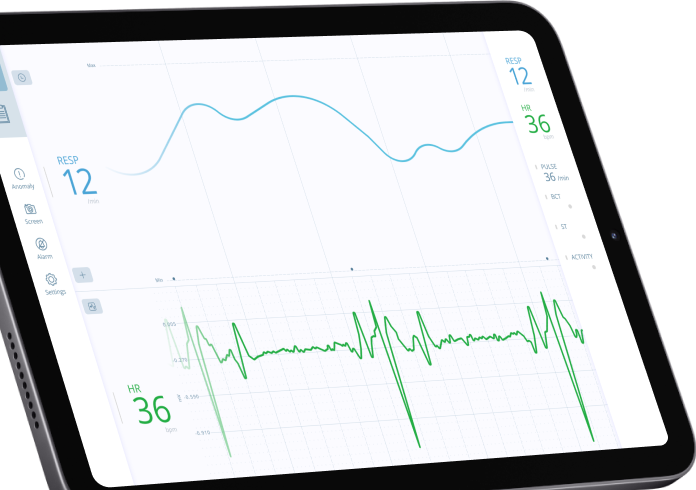

Healthcare

Medical and operational data often exist across disconnected systems, limiting their practical use. Our solutions unify these data sources into a structured environment where patient records, device inputs, and operational metrics remain consistent and accessible. This creates a stable foundation for faster coordination and reliable decision-making.

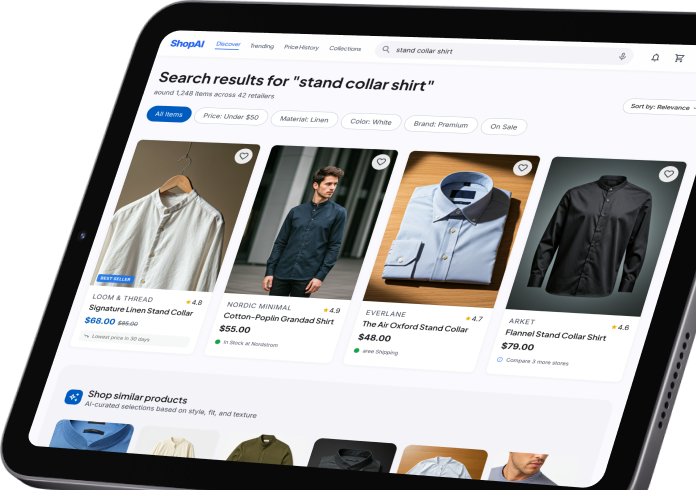

Retail and eCommerce

Our systems process user interactions as they happen and immediately apply them to pricing logic, recommendations, and inventory decisions. Data moves directly into execution, without waiting for reporting cycles. This leads to higher conversion rates, improved retention, and better inventory utilization.

Manufacturing

Industrial environments generate constant data streams that rarely translate into immediate action. Our platforms turn machine and sensor data into a continuous control layer for production. Deviations surface early, and operational decisions follow before disruptions occur.

Production remains stable, downtime decreases, and efficiency improves across facilities.

Logistics and transportation

Operational efficiency depends on adapting to constantly changing conditions. Our Big Data solutions process live data from routes, fleets, and demand signals, keeping execution aligned with real-world conditions. Planning evolves continuously instead of relying on static models.

Our Big Data development services help you reduce inefficiencies, control costs, and maintain consistent delivery performance.

Advertising and media

Campaign performance shifts faster than traditional reporting cycles can capture. Our data systems connect performance signals directly to campaign execution. Targeting, bidding, and segmentation adjust continuously based on live data.

Marketing spend becomes measurable, controlled, and responsive to actual results.

Turn Big Data into Big Results

We help you extract insights, optimize operations, and innovate faster with end-to-end data systems.

What your business gets from Big Data

Faster decisions based on real-time data

Your teams operate on live data. Market changes, operational issues, and customer behavior are identified as they happen, allowing immediate action without waiting for analysis cycles.

Lower infrastructure costs through optimized architecture

Data processing, storage, and transfer are structured to eliminate unnecessary load. Distributed pipelines, tiered storage, and edge processing reduce cloud expenses while maintaining performance at scale.

Reliable data for analytics and AI

Data pipelines enforce validation, deduplication, and consistency at every stage. Decisions and models operate on clean, structured data, reducing errors and increasing confidence across all data-driven operations.

Scalable systems that support growth

The architecture is designed to handle increasing data volumes, users, and integrations without reengineering. As the business grows, the platform continues to perform without bottlenecks or structural limitations.

Faster path to AI and automation

Your data becomes structured, accessible, and ready for advanced use cases. Predictive models, automation workflows, and AI systems can be deployed on top of your existing data foundation without rebuilding infrastructure.

Full visibility across operations

Data from systems, applications, and devices is unified into a single operational view. Leadership gains direct access to performance metrics, system behavior, and business signals.

Frequently asked questions

How do you handle “Data Gravity” when processing petabytes of data for real-time AI inference?

Moving petabytes of raw data to a central LLM is not practical. We solve the Data Gravity problem by moving the intelligence to the data. We use edge vectorization and distributed processing (Spark/Flink) to summarize and vectorize data locally at the source, transmitting only high-value semantic embeddings to the central cloud for AI reasoning.

What is the difference between a data lake and a vector database for enterprise AI?

A data lake is for storage. A vector database is for retrieval. Your data lake (for example, object storage such as Amazon S3) or data warehouse (such as Snowflake) stores the raw “memory” of your company, and we architect a vector DB layer on top of it. This layer stores semantic embeddings, allowing your LLMs to find relevant information by meaning.

How do we prevent “Garbage In, Garbage Out” in our AI models?

AI is only as smart as its context. We implement semantic data cleansing. Our pipelines use small language models (SLMs) to audit your data for reasoning quality, ensuring that the documents fed into your RAG system are high-signal, accurate, and non-contradictory.

How do we prepare our legacy SQL data warehouse for generative AI and RAG pipelines?

LLMs cannot query the data held in legacy relational databases on their own, and without that context they tend to hallucinate. We engineer semantic ETL bridges: we extract your legacy SQL data, apply semantic chunking algorithms, and sink the transformed data into a modern vector database. This allows your enterprise AI to retrieve historical database context using natural language.
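The extract-and-chunk step can be sketched as follows. This is a simplified illustration: it uses an in-memory SQLite table as a stand-in for a legacy warehouse, and it packs whole rows into size-limited text chunks instead of applying true semantic chunking. The table, its columns, and the `rows_to_chunks` helper are hypothetical; embedding the chunks and writing them to a vector database are left out.

```python
import sqlite3

def rows_to_chunks(conn, table, max_chars=120):
    """Serialize each row as 'column: value' text and pack rows into chunks.

    Rows are never split across chunks, so every chunk stays readable.
    The table name is interpolated directly, so it must come from trusted code.
    """
    cursor = conn.execute(f"SELECT * FROM {table}")
    columns = [d[0] for d in cursor.description]
    chunks, current = [], ""
    for row in cursor:
        line = "; ".join(f"{c}: {v}" for c, v in zip(columns, row))
        # Start a new chunk when adding this row would exceed the limit.
        if current and len(current) + len(line) + 1 > max_chars:
            chunks.append(current)
            current = ""
        current = f"{current}\n{line}" if current else line
    if current:
        chunks.append(current)
    return chunks

# Stand-in for a legacy warehouse table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, customer TEXT, status TEXT)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, "Acme", "shipped"), (2, "Globex", "pending"), (3, "Initech", "cancelled")],
)

chunks = rows_to_chunks(conn, "orders", max_chars=80)
```

Each chunk is plain text that an embedding model can process, and it keeps the column names next to the values so that the retrieved context is self-describing.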

How do you prevent our proprietary Big Data from being leaked to public models like OpenAI?

We engineer zero-trust data gateways so that your data stays inside your secure network perimeter. Where a hosted model is required, we use private cloud endpoints (like Azure OpenAI) under terms that exclude your data from model training, and we configure logging and retention to the minimum the provider allows. For absolute data sovereignty, we can deploy open-source models (like Llama 3) entirely on your bare-metal, on-premises infrastructure.

See Real Big Data Projects in Action

Explore how we’ve helped companies turn massive datasets into measurable impact.

How we deliver Big Data systems

Our delivery model is designed to move from fragmented data environments to a production-grade platform with clear control over performance, cost, and scalability. Each stage contributes directly to how the system operates in real conditions, not just how it is built.

A structured evaluation of your current data landscape – systems, pipelines, storage layers, and integrations – with a focus on where performance is lost and where costs accumulate.

The outcome is a prioritized execution plan that connects technical changes to business impact: faster reporting cycles, consistent metrics, and reduced infrastructure waste.

A system blueprint that defines how data is ingested, processed, stored, and accessed across the organization.

The architecture accounts for:

- Real-time vs batch workloads

- Structured and unstructured data

- Integration with existing platforms

- Future scaling requirements

This stage establishes how the platform behaves under growth, not just how it looks at launch.

Reliable data flow across all sources – APIs, internal systems, streaming inputs, and historical datasets.

Pipelines are built with embedded validation, deduplication, and transformation logic, ensuring that downstream systems operate on consistent and trustworthy data.

This directly affects reporting accuracy, operational decisions, and model performance.
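Embedded validation, deduplication, and transformation can be sketched as a single pass over incoming records. The field names, the rejection reasons, and the `clean` helper are assumptions for illustration; a production pipeline would apply the same checks inside its processing framework and route rejected records to a quarantine store.

```python
def clean(records, required=("id", "amount")):
    """Validate, deduplicate, and normalize records.

    Returns (kept, rejected), where rejected pairs each record with a reason
    so that nothing is dropped silently.
    """
    seen, kept, rejected = set(), [], []
    for rec in records:
        if any(rec.get(field) is None for field in required):
            rejected.append((rec, "missing field"))
        elif rec["id"] in seen:
            rejected.append((rec, "duplicate id"))
        else:
            seen.add(rec["id"])
            # Transformation: normalize amounts to floats with two decimals.
            kept.append({**rec, "amount": round(float(rec["amount"]), 2)})
    return kept, rejected

# Hypothetical raw input mixing strings, numbers, duplicates, and gaps.
raw = [
    {"id": "a1", "amount": "19.990"},
    {"id": "a1", "amount": "19.990"},
    {"id": "a2", "amount": None},
    {"id": "a3", "amount": 5},
]

kept, rejected = clean(raw)
```

Downstream reports and models read only from `kept`, while `rejected` gives operators a record of what was excluded and why.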

A unified data environment combining storage, processing, and integration layers into a single operational system.

Instead of isolated tools, the platform functions as a connected infrastructure where data moves predictably between components and remains accessible across teams. This creates a stable foundation for analytics, automation, and AI use cases.

Verification of system behavior under production-like conditions:

- High data volumes

- Concurrent workloads

- Incomplete or delayed inputs

- Failure scenarios

Monitoring, logging, and alerting are configured at this stage, ensuring that system performance is measurable and controlled before full rollout.

Deployment into live operations with full observability and defined scaling mechanisms.

As data volume, usage, and integrations grow, the platform adapts without structural changes – maintaining performance while controlling infrastructure costs.

Post-launch support focuses on optimization, expansion, and long-term system efficiency.

Awards & Recognitions

Let’s start

If you have any questions, email us at info@sumatosoft.com.